Real-Time Data Integration for Midstream Pipeline Operations: Architecture Patterns That Scale

How modern data fabrics replace batch processing with real-time intelligence for pipeline operators.

Duke Mattoon

February 2026

Midstream pipeline operators collect large volumes of data from SCADA systems, flow computers, custody transfer meters, and ETRM platforms, yet struggle to turn that data into timely operational intelligence. This article presents architecture patterns for building real-time data integration that scales with operational complexity.

The Data Silo Problem in Pipeline Operations

A typical midstream operator runs dozens of disconnected systems: SCADA for operational control, ETRM for commercial management, separate measurement and allocation systems, accounting platforms, and ad-hoc spreadsheets filling the gaps between them. Each system holds a fragment of the operational picture.

This fragmentation is more than an IT inconvenience; it directly affects operational performance. When schedulers can't see real-time meter data, accountants wait days for allocation results, and managers rely on stale dashboards, decisions across the organization are made late and on incomplete information.

From Batch to Real-Time: The Architecture Shift

Traditional integration relies on nightly batch jobs: extract data from source systems, transform it through ETL pipelines, and load it into a data warehouse. This approach worked when decisions were made weekly or monthly. It fails when operations demand hourly or minute-level visibility.

Modern real-time architectures use event streaming (Apache Kafka, Amazon Kinesis), change data capture (CDC), and API-driven integration to move data as it's created. The result is a unified operational view that updates in near real time, enabling proactive decision-making rather than retrospective reporting.
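To make the contrast with nightly batch loads concrete, here is a minimal sketch of the event-driven side, written in plain Python with no broker attached. The `ChangeEvent` schema, the `OperationalView` class, and the per-source sequence number are illustrative assumptions, not part of any specific Kafka, Kinesis, or CDC product. In a real deployment the events would arrive from a stream consumer rather than a Python list.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional, Tuple


@dataclass(frozen=True)
class ChangeEvent:
    """One change captured from a source system (hypothetical schema)."""
    source: str             # e.g. "scada" or "etrm"
    key: str                # e.g. a meter or nomination ID
    op: str                 # "upsert" or "delete"
    value: Optional[float]  # new value; None for deletes
    seq: int                # increasing sequence number per source


class OperationalView:
    """In-memory view that is updated as each event arrives."""

    def __init__(self) -> None:
        self.state: Dict[Tuple[str, str], float] = {}
        self.last_seq: Dict[str, int] = {}

    def apply(self, event: ChangeEvent) -> bool:
        """Apply one event; return False if it was a replay and was skipped."""
        # Ignore events already seen so that re-delivery is harmless.
        if event.seq <= self.last_seq.get(event.source, -1):
            return False
        if event.op == "upsert":
            self.state[(event.source, event.key)] = event.value
        elif event.op == "delete":
            self.state.pop((event.source, event.key), None)
        else:
            raise ValueError(f"unknown op: {event.op}")
        self.last_seq[event.source] = event.seq
        return True


def consume(view: OperationalView, events: Iterable[ChangeEvent]) -> int:
    """Feed events into the view and return how many were applied."""
    return sum(1 for event in events if view.apply(event))
```

The view changes the moment an event is applied, so a dashboard reading `view.state` never waits for a nightly load. Skipping already-seen sequence numbers is one simple way to tolerate redelivered events. Production systems usually track offsets per partition or per key instead of per source.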

"Organizations that shift from batch to real-time data integration report 40% faster issue resolution and 25% reduction in unplanned downtime."

The Data Fabric Pattern for Energy Operations

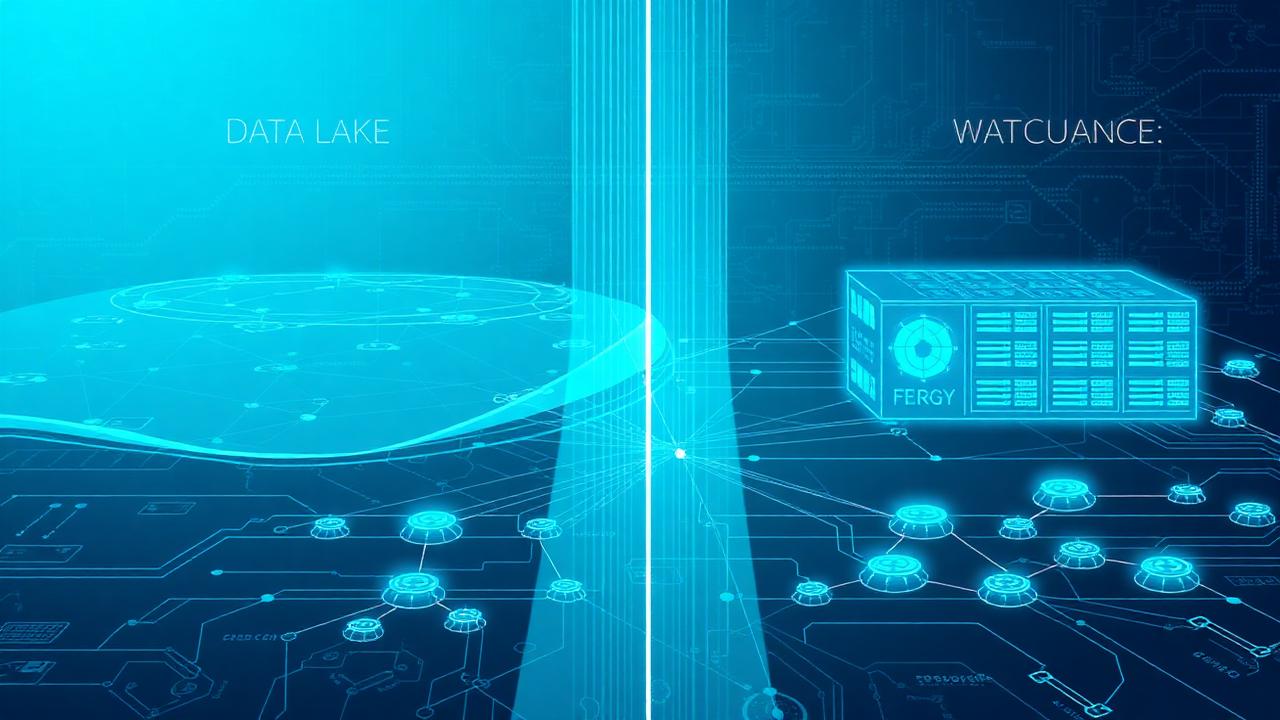

A data fabric provides a virtualization layer across all operational systems, enabling queries that span SCADA, ETRM, measurement, and financial data without requiring physical data movement. Think of it as a federated query layer with built-in governance and lineage.

For pipeline operators, this means a scheduler can see real-time meter readings alongside contract volumes and nomination status in a single view. An analyst can trace a volume discrepancy from the meter through allocation to settlement without switching between five systems.
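The single-view idea can be sketched as a small federated lookup, assuming each system exposes a function that returns its fields for a given meter ID. The `DataFabric` class, the field names, and the in-memory dictionaries standing in for SCADA and ETRM are all hypothetical. A real fabric would query the live systems through their own connectors.

```python
from typing import Callable, Dict, Optional

Row = Dict[str, float]
Lookup = Callable[[str], Optional[Row]]

# Stand-ins for live systems; the data stays in its source until queried.
_SCADA: Dict[str, Row] = {"M-101": {"metered_volume": 1262.5}}
_ETRM: Dict[str, Row] = {
    "M-101": {"contract_volume": 1300.0, "nominated_volume": 1250.0}
}


class DataFabric:
    """Federated lookup across registered sources, with field-level lineage."""

    def __init__(self) -> None:
        self._sources: Dict[str, Lookup] = {}

    def register(self, name: str, lookup: Lookup) -> None:
        self._sources[name] = lookup

    def meter_view(self, meter_id: str) -> Dict[str, object]:
        """Query every source at request time and merge the results."""
        view: Dict[str, object] = {"meter_id": meter_id}
        lineage: Dict[str, str] = {}
        for name, lookup in self._sources.items():
            row = lookup(meter_id)
            if row is None:
                continue  # this source has nothing for the meter
            for field, value in row.items():
                view[field] = value
                lineage[field] = name  # record which system supplied the field
        view["lineage"] = lineage
        return view


def nomination_variance(view: Dict[str, object]) -> float:
    """Metered volume minus nominated volume for one merged view."""
    return float(view["metered_volume"]) - float(view["nominated_volume"])


fabric = DataFabric()
fabric.register("scada", _SCADA.get)
fabric.register("etrm", _ETRM.get)
```

Calling `fabric.meter_view("M-101")` returns the meter reading, contract volume, and nominated volume together, plus a `lineage` entry naming the system each field came from. That lineage is what lets an analyst trace a discrepancy back to its source.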

Implementation Roadmap

Building a real-time data fabric is a multi-phase journey. Phase 1 focuses on event streaming infrastructure and critical data flows (measurement, scheduling). Phase 2 adds analytical capabilities and historical data integration. Phase 3 introduces AI-driven insights and predictive capabilities.

The key is starting with high-value, high-frequency data flows (typically measurement and SCADA data) and expanding outward. Each phase should deliver measurable value while building the foundation for the next.
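One way to make that prioritization explicit is to rank candidate flows by business value first and update frequency second. The sketch below assumes a team-assigned 1 to 5 value score and a rough updates-per-day figure. The flow names and numbers are illustrative only, not measurements from any operator.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class DataFlow:
    name: str
    business_value: int   # 1 (low) to 5 (high), assigned by the team
    updates_per_day: int  # rough estimate of how often the data changes


def rank_flows(flows: Iterable[DataFlow]) -> List[DataFlow]:
    """Order flows by value, then by frequency, highest first."""
    return sorted(
        flows,
        key=lambda f: (f.business_value, f.updates_per_day),
        reverse=True,
    )


# Hypothetical candidates for a first phase.
candidates = [
    DataFlow("accounting_exports", business_value=3, updates_per_day=1),
    DataFlow("scada_telemetry", business_value=4, updates_per_day=86400),
    DataFlow("custody_measurement", business_value=5, updates_per_day=1440),
    DataFlow("ad_hoc_spreadsheets", business_value=1, updates_per_day=1),
]

phase_one = [flow.name for flow in rank_flows(candidates)[:2]]
```

With these example scores, `phase_one` contains the measurement and SCADA flows, matching the starting point described above.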
